Research Showcase 2026 Was Abuzz with Cutting-Edge Projects and Collaborations

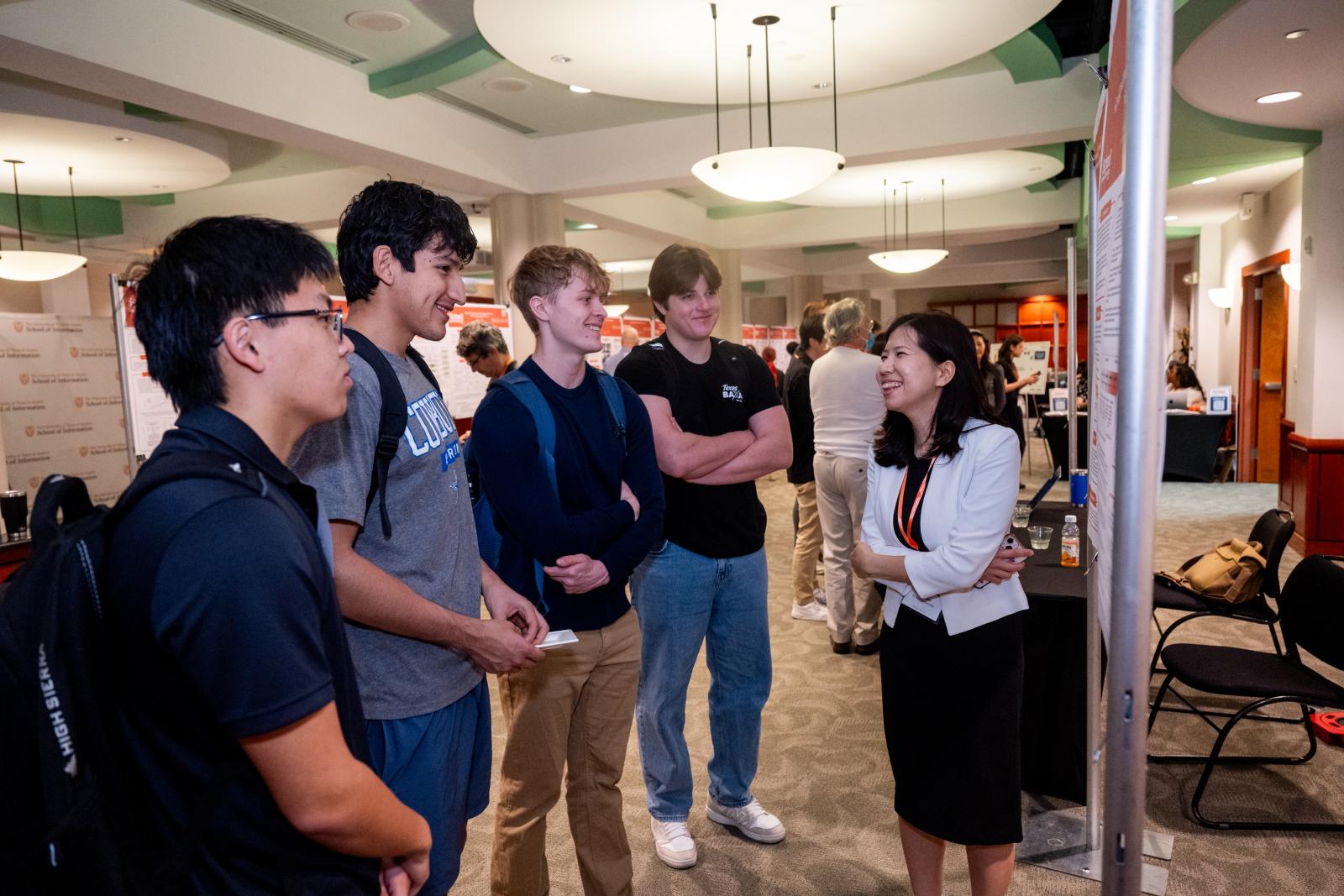

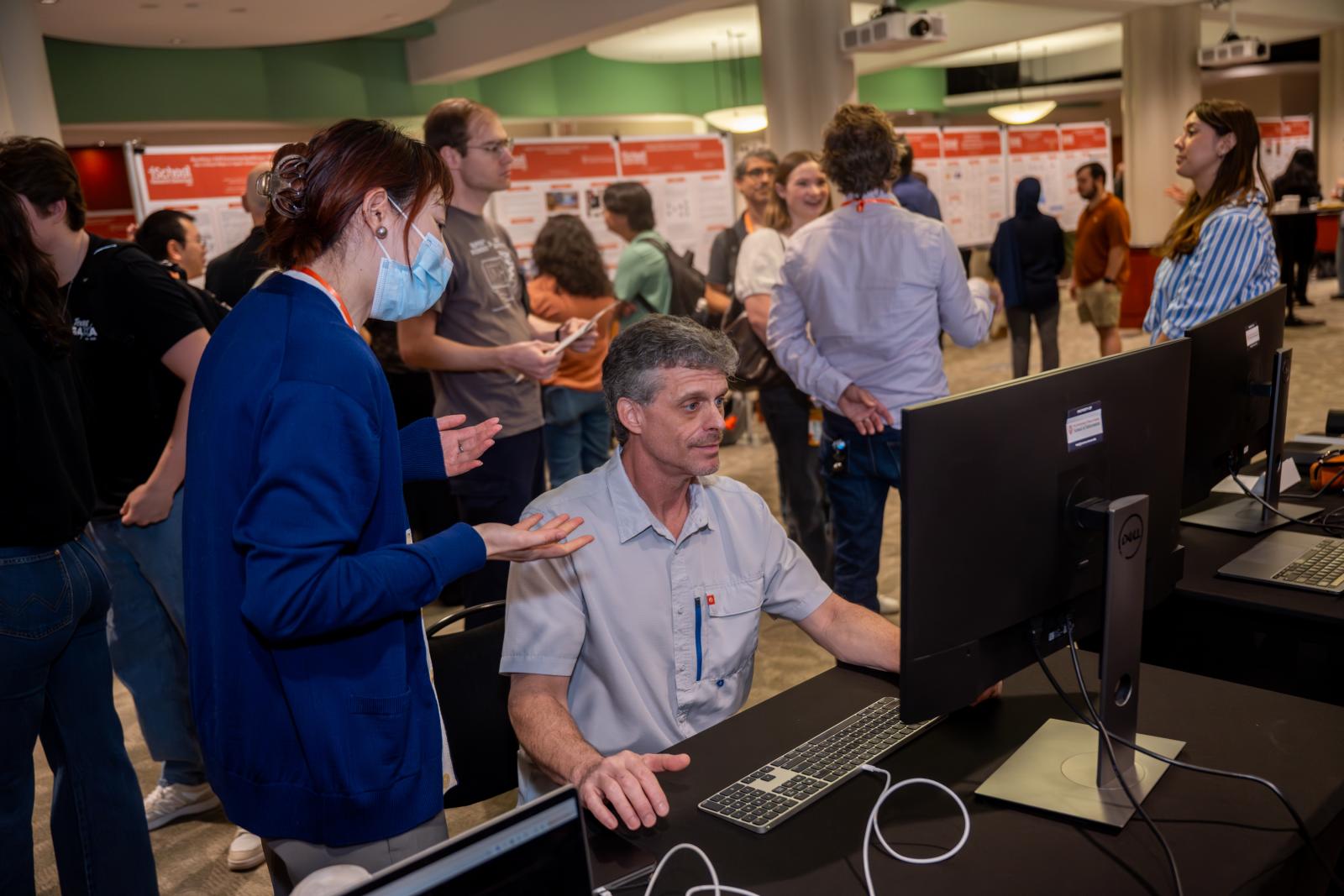

The iSchool invited scholars and visitors into a hive of research energy last Friday, Feb. 27, as doors opened on the annual Research Showcase event at the Texas Union on the UT campus. For those in attendance, three hours was scarcely enough time to take in the dizzying depth and breadth of all the research projects on display. Still, a unifying narrative emerged: The iSchool’s researchers are forward-looking, collaborative, and focused not just on substantive findings but also on effective communication of what their work means and why it matters.

The morning faculty research panel in the Quadrangle Room, “Computational Social Science and Critical Perspectives on Technology and AI,” moderated by Dr. Matthew Lease and featuring professors Hanlin Li, Angela D.R. Smith, and Nathan DeBlunthuis, set a tone of serious inquiry into emerging problems and topics. All three shared tales of their own projects, from Li’s work infilling Wikidata’s information repositories to Smith’s research on gamer communities modifying popular video games to DeBlunthuis’s study of how red flags are assigned to questionable Wikipedia edits.

All three professors raised concerns about the current structure of large language models and generative artificial intelligence, as well as ideas for how these systems might be better designed. DeBlunthuis suggested a community-centric approach to critiquing AI.

“Instead of just thinking about ‘Is this user being hurt or helped by technology?,’ I'm asking, ‘Is this community better able to operate according to its values with this technology?’” he said.

Li, discussing her work with Wikidata, made the hopeful prediction that AI algorithms may evolve to be more sensitive to inputs and adjustments from experts and institutions that are guardians of specialized knowledge. “The next question is how to involve knowledge creators in the LLMs, so their feedback is received,” she said.

After the early panel, the Santa Rita Suite exhibition hall next door opened for poster presentations and demonstrations. Projects presented ran the gamut of the various specialties under the iSchool umbrella, including user experience and design, cybersecurity, library and archives, health informatics, and accessibility in technology. Several took on key hot-button issues of our day.

One such project was “Do AI Copilots Support Learning? An Eye-Tracking Study of Attention and Difficulty,” a study at the iSchool’s IX Lab led by Gavindya Jayawardena, Dan Zhang, Kai-Yu (Kelly) Chang, Cecelia Albright, Hsin-Yu (Joyce) Chang, Pranati Kompella, and Jacek Gwizdka. The inquiry aims to pioneer a quantitative approach to assessing student interest in AI-based learning.

“We would ask participants, mainly iSchool undergrads, to go through different kinds of learning trials with an AI copilot, and, in the process, use an eye tracking method to see if anything changes when they’re reading,” Chang explained. Though the study is in early phases, Chang was hopeful the group’s findings would illuminate whether users were bored or engaged when learning with AI.

Another AI-centered project on display was “Is document remediation obsolete? A study of generative AI alternatives,” by Misha Ohri and Michael McQuaid of the iSchool and Erica Braverman of the UT School of Education. Even today, assistive screen readers have difficulty processing PDF files for vision-impaired users. Various developers have tried to leverage AI to solve the screen-reader PDF problem, but has anyone succeeded thus far?

Not really, Braverman says, although we are getting closer to a fix. Her group tested several apps available on the market; one, which relies almost exclusively on AI, made bizarre mistakes, including hallucinating an entire image that just wasn’t there in the original PDF. The app that tested best for screen-reader conversion accuracy used an AI-human teaming approach. “We found that the tool that worked the best had the least automation,” Braverman said. “It had the most human attention focused on fixing things, but it got the best results.”

Various projects on display touched on the areas of libraries, archives, and preservation, subjects where the iSchool has been a national research leader for decades. For example, four iSchoolers – Qianzi Cao, Haley Triem, Kurt Lemai-Nguyen, and Dr. R. David Lankes – along with Margo Gustina of the University of New Mexico contributed the poster “Understanding AI Readiness in Public Libraries.” The study used a questionnaire, focus groups, and a nationwide survey to assess how public libraries are using AI.

The researchers found that 32.6% of participants reported using AI tools in library work, most often in document processing and writing assistance. Concerns tended to be primarily ethical and professional – including accuracy concerns – rather than technical. In fact, to the extent that librarians wanted AI training and support, it was most often in how to use the technology responsibly. Lankes, the Virginia and Charles Bowden Professor of Librarianship, has written extensively on libraries and AI, and recently discussed his key takeaways with us.

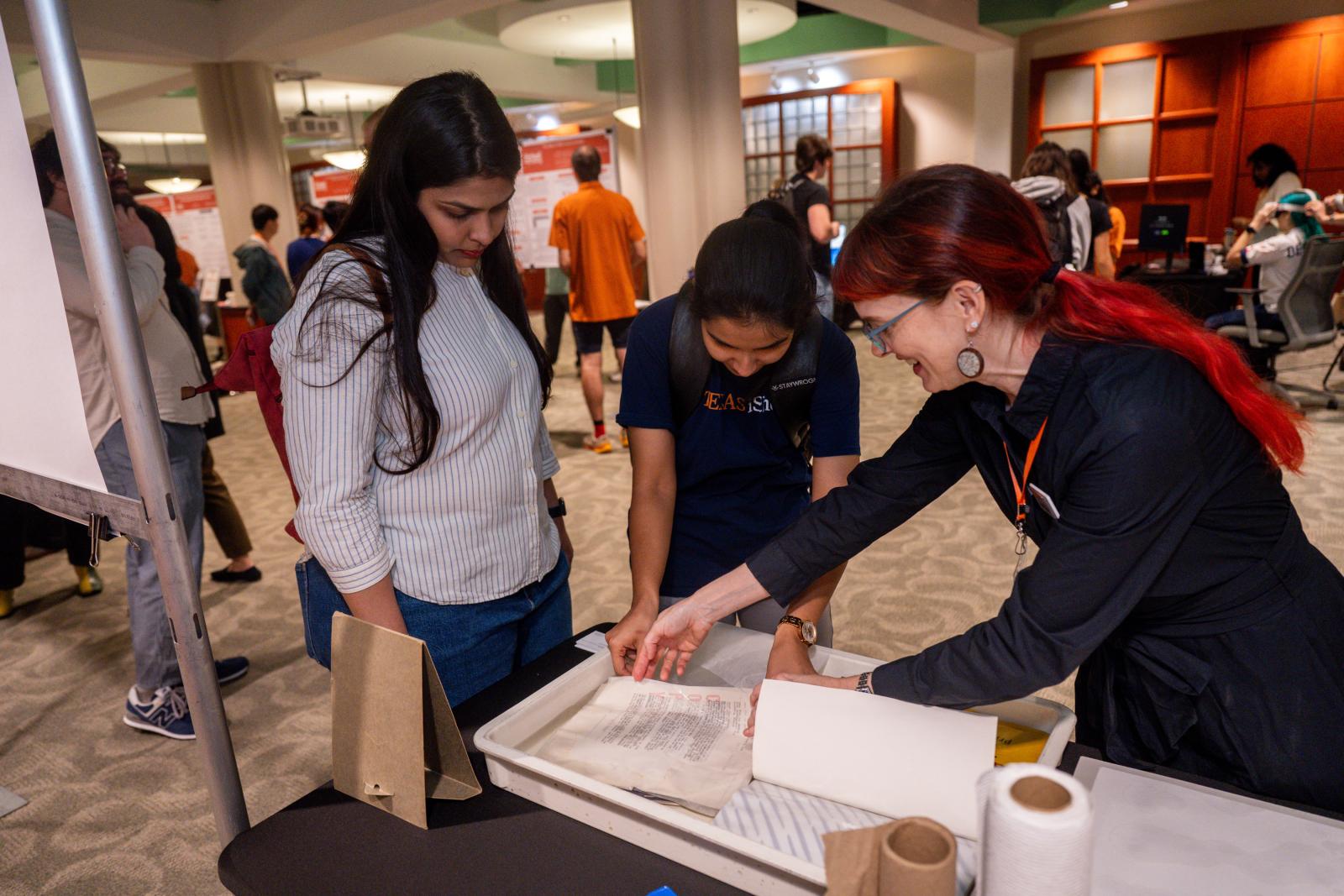

Meanwhile, across the exhibition hall, Professor Sarah Norris contributed both a demonstration on preservation of water-damaged papers and books and a related poster: “Preparing for the Worst: Cultural Heritage Risk Assessment for Catastrophic Events.” Norris is hoping to take a hard look at the current approach used by memory institutions like libraries and museums to assess risk from catastrophic events. She is concerned first that current risk assessment formulas are not based on empirical evidence, and second that they may need adjustment in an age of increased environmental risks.

Norris says her project is in the earliest stage, that of seeking a collaborator from another related field. “I’m looking for statistics people, computing people, or other information people who want to help me see what I don't know,” she says. “I know the preservation part, but the numbers behind this might not make any sense as they are, and they certainly might not scale up very well.”

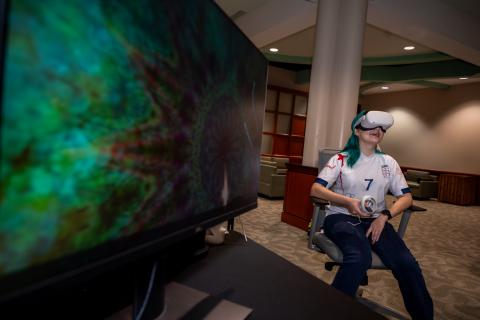

Probably the most popular table in the exhibition hall belonged to the demonstration “Using Virtual Reality as a Therapeutic Tool for Youths’ Mental Health and Wellbeing,” led by Isabella Schloss and Dr. Andrew Dillon from the iSchool, along with Aarushi Gupta of the UT Children’s Research Center. This project collects user perspectives on VR technology in mental health from both clinicians and children aged 10-15.

Showcase attendees lined up to sample one of the commercially available guided-meditation VR experiences used in the study, involving breathing along with a figure of light as it sends sparkles towards and away from the user, against a 3-D background of palpitating flowers and lily pads. “I’m focused on using research to better society, and I'm particularly interested in bettering children's lives,” Schloss said. “I'm device-agnostic, but to think about a tool like VR being able to help improve kids' mental health and well-being is what's exciting to me and motivates this work.”

To finish up the Showcase, the crowd headed back into the Quadrangle Room to hear from another trio of iSchool professors – Brian McInnis, Earl Huff, Jr., and Steven Slota – along with moderator Ying Ding, for the panel “Civic Discourse and AI for Access, Education, and Memory.” Once again, the rapid adoption of AI was a top concern for those onstage.

McInnis, whose research focuses on civic deliberation online and offline, bemoaned the way that AI can be misused to replicate a familiar outcome while avoiding the procedural steps where deeper understanding is unlocked.

“A lot of the use of generative AI recently is this race to some outcome we're modeling after,” McInnis noted. “But the process, and the tacit knowledge associated with conducting that process, is the human intelligence that's lost when there is pure reliance on generative AI.”

Huff, who studies the use of AI in computer science education, took a limited view of how AI should be used in the classroom in particular. “I see AI as a tool, much like someone uses a calculator to calculate a problem that they can already do by hand,” Huff said. “I see AI in that same realm – it's an augmentation of the learning.”

Slota, who is working towards a conceptual framework of generative AI as a memory practice, took a wide-angled view of how we use generative AI, asking those who’d come to the Showcase to imagine how social scientists two or three decades in the future might discuss the societal values of our day as reflected in our algorithms.

“What does our current generative AI say about us now?” Slota asked the assembled scholars and visitors – a question that lingered in the mind after the event had ended. “How are we inscribing and embedding our values, our priorities and our goals into generative AI?”
